Auto-Captions: Limitations of Automated Speech Recognition

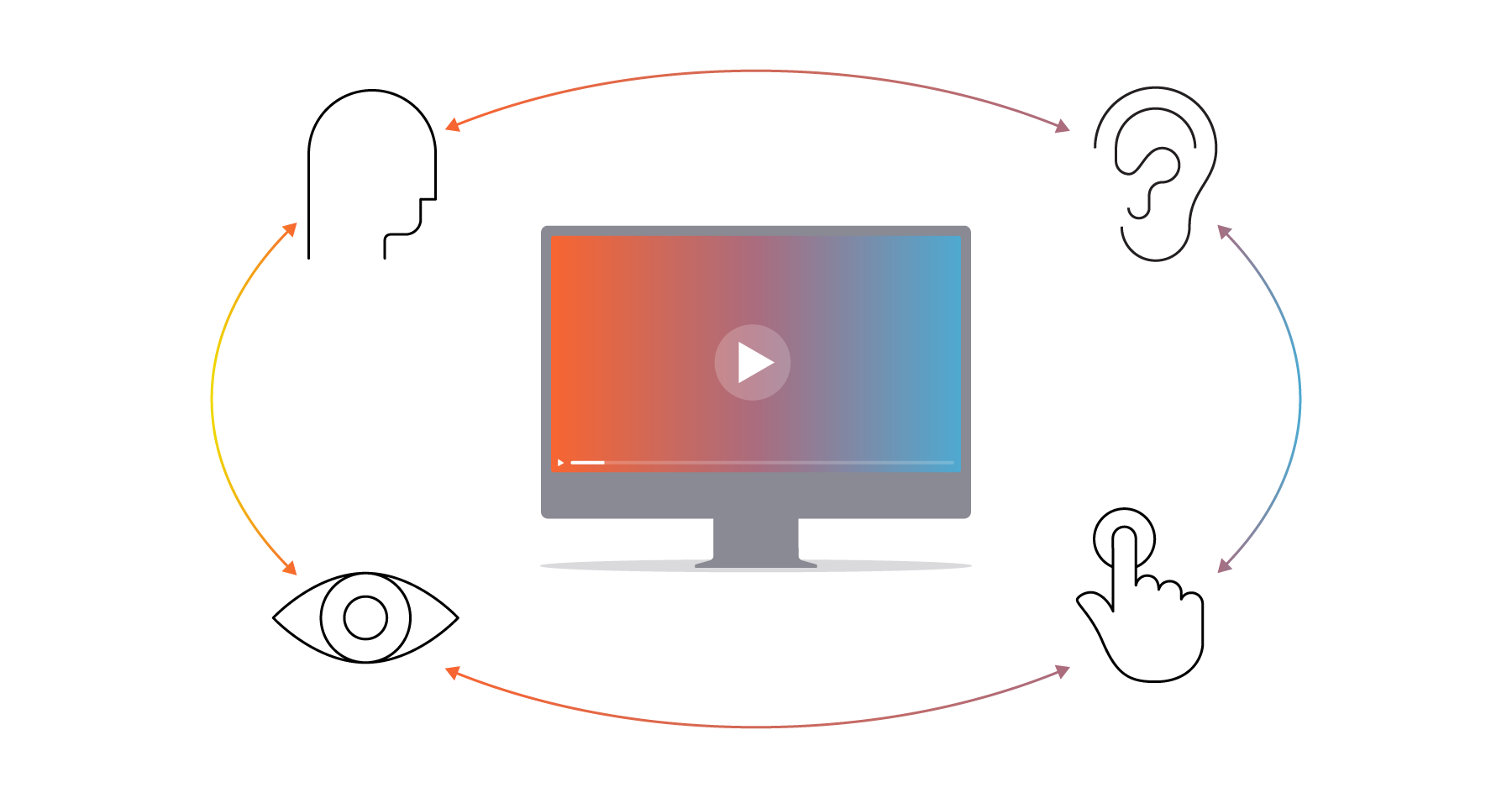

Media

The rise of generative artificial intelligence (GAI) has taken the world by storm, with applications in personal and professional spheres alike. In the captioning industry, GAI can power automatic speech recognition (ASR), which converts spoken language into written text. ASR technology has never been more accurate than it is today, yet our research shows that even the best engines still fall short of the industry standard for caption accuracy. That is why humans remain a mainstay in producing high-quality, accessible captions.

The Accuracy of ASR Engines

In the captioning world, accuracy rates are used to gauge the precision and quality of a caption file or transcript. Since accuracy is crucial to providing a truly equitable accommodation for d/Deaf and hard of hearing audiences, the industry standard for minimum acceptable caption accuracy is 99%.

When measuring the accuracy of an ASR engine, there are a variety of factors to consider. As outlined by the FCC, “Accurate closed captions must convey the tone of the speaker’s voice and intent of the content.” Proper spelling, spacing, capitalization, and punctuation are key elements of accurate captions, as are non-speech elements like sound effects and speaker identifications.

Because ASR engines are driven by artificial intelligence, their capabilities are limited to the patterns they learned during training. Despite continuing advancements, AI technology can't apply logic or understand context the way a human can. In practice, ASR transcripts are notoriously riddled with inconsistent spelling and grammar, and they often omit relevant non-speech elements entirely.

However, not all ASR engines are created equal. 3Play Media's report on the state of ASR evaluated the performance and accuracy of 10 engines at captioning and transcribing pre-recorded content. We found that some engines are better suited to particular types of content, which adds nuance to the possible use cases for ASR-generated captions. Only two of the 10 engines produced output measuring over 95% accuracy. That is impressive, but it still falls short of the 99% standard, so ASR output on its own is not sufficient to produce accessible captions.
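To make the gap between 95% and 99% concrete: one widely used way to score ASR output is word error rate (WER), the number of word substitutions, deletions, and insertions needed to turn the ASR text into a reference transcript, divided by the number of words in the reference. Accuracy is then roughly 1 - WER. The sketch below assumes that word-level definition only; it is not 3Play's measurement method, and the function names are hypothetical. Words are compared case-sensitively with punctuation attached, so capitalization and punctuation mistakes count as errors, in the spirit of the FCC criteria above.

```python
def word_errors(reference: str, hypothesis: str) -> int:
    """Word-level edit distance: minimum substitutions + deletions + insertions.

    Assumption: tokens are split on whitespace and compared exactly, so
    "Captions" vs "captions" or "mat." vs "mat" each count as one error.
    """
    ref = reference.split()
    hyp = hypothesis.split()
    # prev[j] holds the distance between the first i-1 reference words
    # and the first j hypothesis words (classic dynamic-programming table).
    prev = list(range(len(hyp) + 1))
    for i in range(1, len(ref) + 1):
        curr = [i] + [0] * len(hyp)
        for j in range(1, len(hyp) + 1):
            cost = 0 if ref[i - 1] == hyp[j - 1] else 1
            curr[j] = min(
                prev[j] + 1,         # deletion: reference word missing from ASR
                curr[j - 1] + 1,     # insertion: extra ASR word (e.g. a hallucination)
                prev[j - 1] + cost,  # substitution, or a match when cost == 0
            )
        prev = curr
    return prev[len(hyp)]


def word_accuracy(reference: str, hypothesis: str) -> float:
    """Approximate accuracy as 1 - WER (can drop below 0 with many insertions)."""
    n = len(reference.split())
    if n == 0:
        raise ValueError("reference transcript is empty")
    return 1 - word_errors(reference, hypothesis) / n


def errors_allowed(total_words: int, accuracy: float) -> int:
    """How many word errors a transcript of this length can contain at a given accuracy."""
    return round(total_words * (1 - accuracy))


if __name__ == "__main__":
    # One substitution ("the" -> "a") in a six-word reference: 5/6 accuracy.
    print(round(word_accuracy("the cat sat on the mat", "the cat sat on a mat"), 3))  # 0.833
    print(errors_allowed(1000, 0.99))  # 10
    print(errors_allowed(1000, 0.95))  # 50
```

Under this simplified metric, a 1,000-word transcript at 99% accuracy can contain about 10 errors, while one at 95% can contain about 50, five times as many mistakes for a viewer to work around.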

The Impact of ASR Inaccuracy

The repercussions of inaccurate captions may reach further than you think. People with disabilities and their families wield spending power in the billions, but their willingness to spend drops significantly when online experiences are inaccessible. With the 2023 WebAIM Million Report finding accessibility failures on over 96% of website home pages, this represents a real gap in potential revenue streams.

Low-quality captions don't just make content inaccessible; they can also hurt your user experience across the board. The limitations of ASR make its transcripts more susceptible to substitution errors, hallucinations (text with no basis in the audio), and formatting errors, all of which can confuse both your audience and search algorithms. Video transcripts also affect SEO, an essential part of many brand marketing strategies.

Search engines rely on text associated with video content to index and rank results appropriately. This makes transcripts and caption files some of the strongest contributors to a site's keyword density and search rankings. If your brand relies solely on automatically generated transcripts, errors can drag down your search strategy: misrecognized keywords and long-form phrases create a disconnect between your content, your target audience, and their engagement potential.

On top of the technical disadvantages, presenting poor-quality captions calls your whole brand into question. In the UK, 59% of consumers report that spelling errors and bad grammar would make them doubt the quality of services being offered. In other words, inaccurate captions undermine your marketing efforts and erode the confidence of your audience.

How to Use ASR Wisely

ASR is an essential tool for creating closed captions efficiently at scale. ASR-generated transcripts streamline captioning by giving human editors a foundational first draft to review. Because that draft is already aligned with the audio, it eliminates the need for manual timecode association, typically the most time-consuming part of caption production. Combining professional human transcriptionists with technology therefore makes for a more efficient quality assurance process while keeping costs low for customers.
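To illustrate why starting from ASR output removes the manual timing step: many ASR engines can return a start and end time for each recognized word, and those timings can be grouped into caption cues automatically. The sketch below assumes such word-level timestamps and writes SubRip (SRT) cues. `TimedWord`, `words_to_srt`, and the 32-character cue limit are hypothetical choices for illustration, not 3Play's patented process; a human editor would still correct the words themselves.

```python
from dataclasses import dataclass


@dataclass
class TimedWord:
    """Hypothetical shape of one ASR result: the word plus its timing in seconds."""
    text: str
    start: float
    end: float


def to_srt_time(seconds: float) -> str:
    """Format seconds as an SRT timestamp, HH:MM:SS,mmm."""
    ms = round(seconds * 1000)
    h, ms = divmod(ms, 3_600_000)
    m, ms = divmod(ms, 60_000)
    s, ms = divmod(ms, 1000)
    return f"{h:02d}:{m:02d}:{s:02d},{ms:03d}"


def words_to_srt(words: list[TimedWord], max_chars: int = 32) -> str:
    """Group timed words into cues of at most max_chars characters (assumed limit)."""
    cues: list[list[TimedWord]] = []
    current: list[TimedWord] = []
    for w in words:
        candidate = " ".join(x.text for x in current + [w])
        if current and len(candidate) > max_chars:
            # Adding this word would overflow the cue: close it and start a new one.
            cues.append(current)
            current = [w]
        else:
            current.append(w)
    if current:
        cues.append(current)

    blocks = []
    for i, cue in enumerate(cues, start=1):
        text = " ".join(w.text for w in cue)
        # Each cue inherits its timing from the first and last ASR word it contains.
        timing = f"{to_srt_time(cue[0].start)} --> {to_srt_time(cue[-1].end)}"
        blocks.append(f"{i}\n{timing}\n{text}\n")
    # A blank line separates consecutive SRT cues.
    return "\n".join(blocks)


if __name__ == "__main__":
    sample = [
        TimedWord("Hello", 0.0, 0.4),
        TimedWord("and", 0.5, 0.6),
        TimedWord("welcome", 0.7, 1.1),
    ]
    print(words_to_srt(sample))
```

Splitting on a character limit is the simplest possible rule; production captioning typically also weighs reading speed, phrase boundaries, and speaker changes when deciding where one cue ends and the next begins.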

3Play’s patented process combines the best of both worlds to create highly accurate transcripts and media accessibility services. Our transcriptionists undergo a rigorous certification process to ensure their review and quality assurance is up to par. In tandem with top-of-the-line ASR technology, this allows us to guarantee an average measured accuracy of 99.6%.

To make video accessibility easy, we integrate with popular video platforms like Brightcove, so captioning works where you already do. Beyond making content accessible and keeping up with compliance, the integration between 3Play and Brightcove increases the value of your video investment with one click.